AIR QUALITY ENGINEERS

U.C. Technologies is a hands-on engineering firm and systems integrator that delivers sustainable air quality solutions for indoor and outdoor environments. Together with you, we look for environmental installations that are economically sound for your business, serve everyone involved as well as possible, and place a minimal burden on the surroundings. Wherever possible, our installations incorporate modern ideas such as energy efficiency, energy recovery, cradle to cradle, the Internet of Things, Big Data, Clean Production, Smart Industry, Lean and the circular economy.

The environmental installations that U.C. Technologies builds are measurement-driven systems that achieve and/or monitor the desired air quality wherever it is needed. As an engineering firm, we draw on a combination of mechanical engineering, electrical engineering, physics, chemistry, biology, installation engineering and control engineering. With this insight we devise sustainable total solutions in the fields of thermodynamics, integrated safety, climate control and industrial ventilation. We look at each problem from several angles, and U.C. Technologies takes into account developments in the working environment, the market, laws and regulations, and the immediate surroundings of the building.

ARE YOU LOOKING FOR A SUSTAINABLE AIR QUALITY SYSTEM FOR INDOOR AND OUTDOOR ENVIRONMENTS?

ENGINEERING FIRM FOR INDOOR AND OUTDOOR AIR QUALITY

There are many environments in which monitoring air quality values can be important:

- The contents of shipping containers during customs clearance,

- Inside access gates, for access control,

- On production lines, both for protection and for quality control of the product,

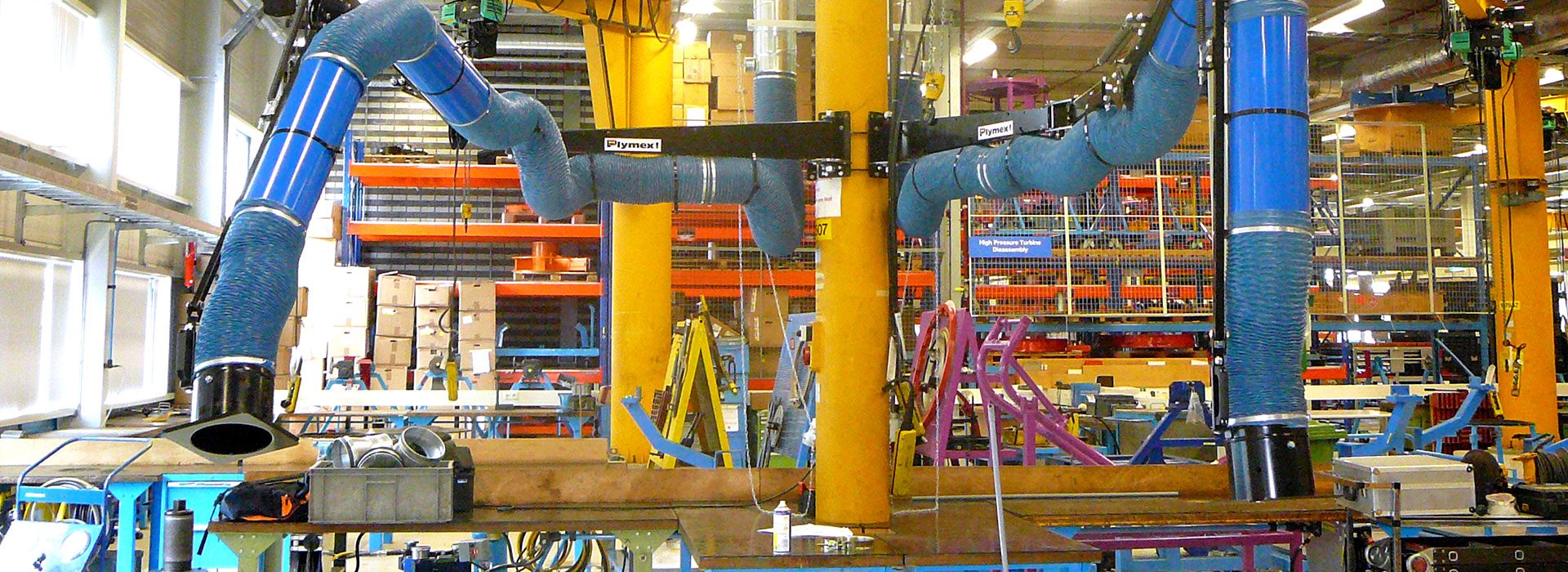

- At the workplace, to protect employees against irritation, allergic reactions, acute illness, or chronic diseases such as COPD, asthma and cancer,

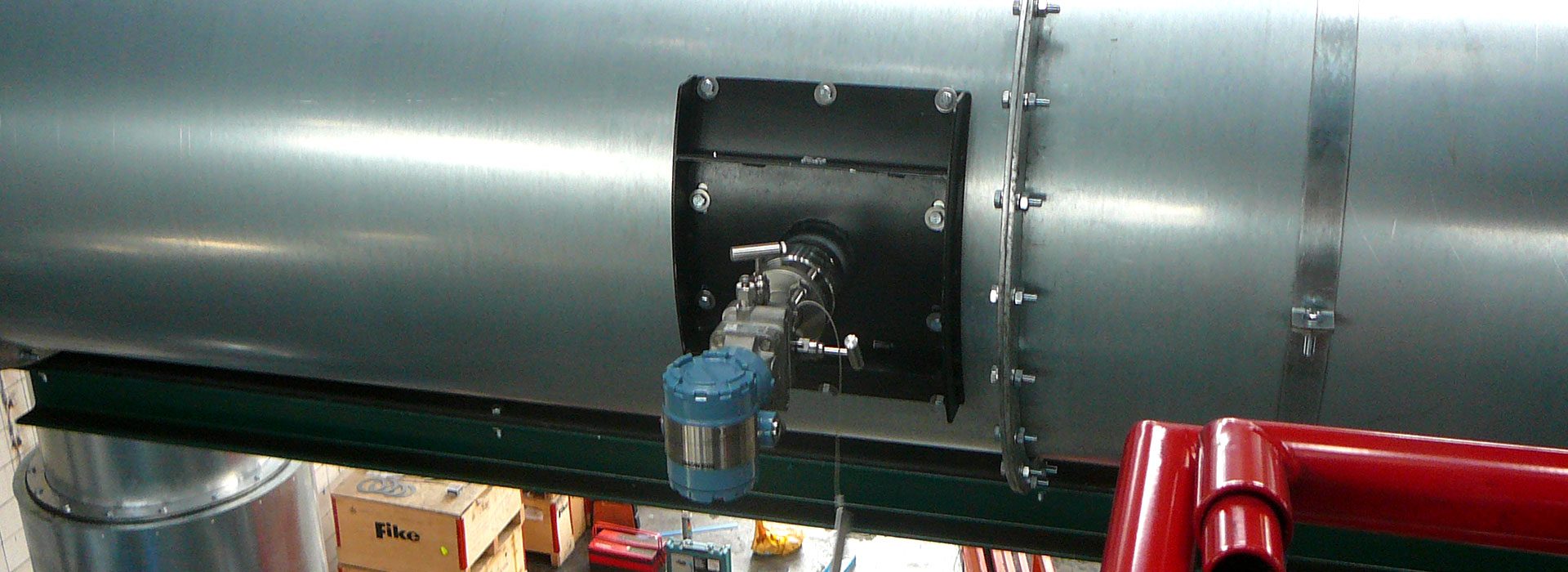

- As protection against fire and explosion damage,

- To prevent the spread of pathogens,

- To combat odour nuisance,

- To prevent pollution of the outdoor environment.

Wherever possible, we use continuous measurements to characterise the environment. In other cases, we carry out certified measurements at set intervals to monitor air conditions closely. To control our environmental systems effectively, we use detection methods based on changes in physical, chemical and biological properties, as well as gas chromatography, mass spectrometry and infrared (IR) spectrometry. In our designs we draw on every technically feasible cleaning method and do not limit ourselves to off-the-shelf cleaning systems. As an engineering firm, we are also brand-independent.

This approach regularly leads us to successful, unconventional solutions such as:

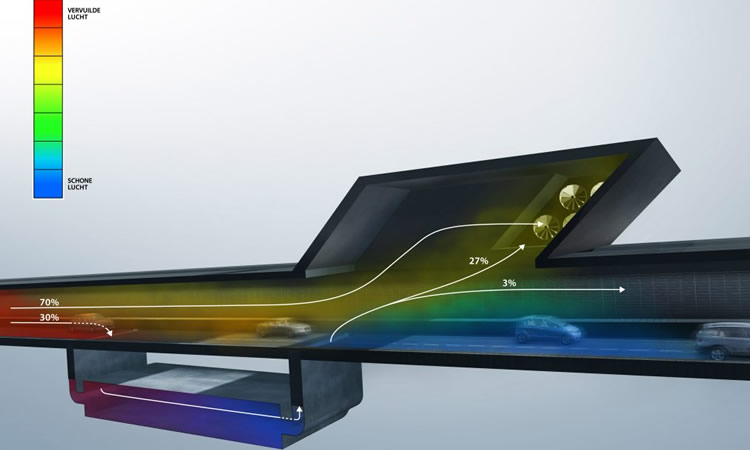

- HD clean tunnel concept ®

- Macbuster ®

- Engine Vapour Extractor (EVE) ®

- Aerosol Filterblussysteem ® (aerosol filter fire-extinguishing system)

- Novodek ® cutting tables

- Electronic air quality monitoring

ROOM VENTILATION BASED ON THE LATEST CONCEPTS

Since its founding, U.C. Technologies has developed many measurement techniques, extraction elements and cleaning installations, some of them patented. Over time, several of these components have become available more cheaply from other suppliers thanks to series production. Our system solutions therefore use standard components as far as possible, supplemented by client-specific ones. On request, we also use reused components. For sustainability reasons, we try to reuse our components as much as possible, which also saves you costs. This allows us to give you maximum added value on every request to U.C. Technologies, with short lead times and a competitive price.

We are also happy to help you purchase individual components. Although our prices there may be less competitive, we offer you the best service.